What Providers Need in 2026 - Safety, Bias, Privacy, and Accountability

Artificial intelligence (AI) is no longer an experimental layer in healthcare technology. It is embedded in analytics dashboards, documentation workflows, predictive risk models, staffing optimization, and compliance monitoring. For IDD (intellectual and developmental disability) providers, Assisted Living Facilities, and long-term support organizations, AI is quietly shaping daily operations in ways that would have been hard to imagine just a few years ago.

But as AI becomes more operational, a new reality is emerging: AI governance is the new compliance.

Healthcare organizations have always operated within strict regulatory environments. Documentation standards, Medicaid requirements, HIPAA protections, medication administration tracking, and incident reporting frameworks are deeply embedded in daily workflows. AI introduces a new dimension to this landscape. When algorithms influence decisions, flag risks, or automate processes, oversight must evolve accordingly.

The question is no longer “Should we use AI?”

The question is “How do we use it responsibly?”

Why AI Governance Matters Now

AI systems do not operate in isolation. They amplify both the strengths and the weaknesses of the data and workflows they are built on. In structured, disciplined environments, they improve visibility and efficiency. In inconsistent environments, they magnify weaknesses.

As AI influences service delivery, organizations must address new governance concerns:

- How accurate are AI-generated insights?

- Are predictive models introducing unintended bias?

- Who is responsible for reviewing automated recommendations?

- How is sensitive data being protected and used?

Without governance, automation can create blind spots instead of clarity.

AI Safety - Accuracy Before Speed

AI can analyze thousands of data points in seconds, but speed does not guarantee correctness. In IDD and Assisted Living settings, even small inaccuracies can have serious operational consequences.

For example, an incorrectly calibrated risk model could overstate behavioral concerns. A documentation summary tool could omit critical context. An automated workflow might escalate the wrong issue. These scenarios are not far-fetched; they are realistic risks in environments where service quality and compliance are tightly connected.

AI governance ensures that human review remains part of high-impact decisions. It reinforces that automation supports professional judgment rather than replacing it. Safety in AI-enabled systems means monitoring outputs, calibrating alerts, and maintaining clear oversight mechanisms.
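Here is a minimal Python sketch of a review gate that shows what keeping human review in high-impact decisions can look like. It assumes the AI system labels each output with a category and a confidence score. The category names, the `AIOutput` type, and the 0.9 threshold are hypothetical placeholders that an organization would replace with its own policy. They are not features of any particular EHR platform.

```python
from dataclasses import dataclass

# Hypothetical high-impact categories; each organization would define its own list.
HIGH_IMPACT_CATEGORIES = {"behavioral_risk", "medication", "incident_escalation"}


@dataclass
class AIOutput:
    category: str      # kind of recommendation the system produced
    confidence: float  # model-reported confidence between 0.0 and 1.0
    summary: str       # text shown to the reviewer


def requires_human_review(output: AIOutput, min_confidence: float = 0.9) -> bool:
    """Return True when a person must review the output before anyone acts on it."""
    if output.category in HIGH_IMPACT_CATEGORIES:
        return True  # high-impact outputs are always reviewed, whatever the confidence
    return output.confidence < min_confidence  # low-confidence outputs are reviewed too


def route(output: AIOutput) -> str:
    """Send the output to a human review queue or let it proceed automatically."""
    return "review_queue" if requires_human_review(output) else "auto_proceed"


# Example: a confident medication suggestion still goes to a reviewer.
print(route(AIOutput("medication", 0.98, "Possible missed evening dose")))  # review_queue
```

The gate routes every high-impact output to a person regardless of confidence, which keeps automation in a supporting role. The confidence threshold is the kind of setting that alert calibration would adjust over time.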

Bias in Healthcare AI - The Hidden Risk

AI systems learn from historical data. If that data reflects inconsistencies, documentation gaps, or systemic bias, the outputs may reinforce those patterns rather than correct them.

In service-driven environments, bias may appear subtly:

- Disproportionate risk scoring across populations

- Misleading incident trend analysis

- Skewed quality metrics based on incomplete records

- Inconsistent performance assessments

Governance requires transparency into how systems operate and regular auditing of their outputs. Organizations must remain aware that data reflects behavior: if documentation practices vary between teams or shifts, AI will mirror those variations.
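Regular auditing can start simply, by comparing how often a model flags each population. The Python sketch below assumes the organization can export (group, flagged) pairs from its system. The function names and the 1.25 ratio threshold are illustrative assumptions. A difference in rates is a prompt for human review, not proof of bias: groups may genuinely differ, and small groups produce noisy rates.

```python
from collections import defaultdict


def flag_rates_by_group(records):
    """records: iterable of (group, flagged) pairs, where flagged is a bool."""
    totals = defaultdict(int)
    flagged = defaultdict(int)
    for group, is_flagged in records:
        totals[group] += 1
        if is_flagged:
            flagged[group] += 1
    return {group: flagged[group] / totals[group] for group in totals}


def disparity_report(records, max_ratio=1.25):
    """Compare each group's flag rate with the overall rate.

    A group is marked for review if it is flagged much more often
    (ratio above max_ratio) or much less often (ratio below 1 / max_ratio)
    than the population as a whole.
    """
    records = list(records)
    if not records:
        return {}
    overall = sum(1 for _, is_flagged in records if is_flagged) / len(records)
    report = {}
    for group, rate in flag_rates_by_group(records).items():
        # If nothing was flagged anywhere, no group stands out.
        ratio = rate / overall if overall else 1.0
        report[group] = {
            "rate": rate,
            "ratio_to_overall": ratio,
            "needs_review": ratio > max_ratio or ratio < 1 / max_ratio,
        }
    return report
```

The check looks in both directions because under-flagging can matter as much as over-flagging. For example, it can reveal that one team's incomplete records hide risks the model never sees.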

For IDD providers in particular, person-centered support must remain central. AI should enhance individualized support planning - never override it.

Privacy and Responsible Data Use

Healthcare organizations are already deeply aware of privacy obligations. AI expands the scope of responsibility by increasing the volume and depth of data being analyzed.

Predictive insights may draw from behavioral trends, medication adherence patterns, staff activity logs, and incident history. While these insights can improve safety and compliance, they also require clear data boundaries.

Effective governance includes:

- Defined data access controls

- Transparent retention policies

- Secure system integrations

- Clear understanding of vendor data practices

Privacy is not only about storage security. It is about responsible use. Organizations must understand what data is being analyzed, how it influences recommendations, and who has access to those outputs.
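One way to make access boundaries explicit is a default-deny permission check that logs every request, so the organization can later see who tried to view which AI outputs. This Python sketch is a hypothetical illustration. The roles, permission names, and in-memory log are assumptions, and a production system would rely on its EHR platform's own access controls and audit trail.

```python
from datetime import datetime, timezone

# Hypothetical role-to-permission mapping; a real policy would come from the organization.
ROLE_PERMISSIONS = {
    "direct_support_professional": {"view_own_caseload_alerts"},
    "nurse": {"view_own_caseload_alerts", "view_medication_insights"},
    "compliance_officer": {
        "view_own_caseload_alerts",
        "view_medication_insights",
        "view_org_trends",
    },
}

access_log = []  # every access attempt is recorded, whether allowed or denied


def can_access(user_role: str, permission: str) -> bool:
    """Default deny: unknown roles and unlisted permissions are refused."""
    allowed = permission in ROLE_PERMISSIONS.get(user_role, set())
    access_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "role": user_role,
        "permission": permission,
        "allowed": allowed,
    })
    return allowed
```

Logging denied attempts as well as granted ones answers both halves of the privacy question: what was accessed, and who tried to reach outputs outside their role.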

Accountability - Defining Ownership

One of the most overlooked governance challenges is accountability. When AI surfaces a compliance risk or flags documentation inconsistencies, who owns the response?

If an automated alert is ignored, is that a system issue or a leadership issue? If predictive insights are available but not reviewed, where does responsibility lie?

AI governance requires defined ownership structures:

- Clear internal champions

- Leadership oversight of system usage

- Established escalation pathways

- Measurable operational standards

Without accountability, AI becomes background noise rather than a meaningful operational tool.
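Ownership and escalation pathways can be written down as data rather than left implicit. The Python sketch below assumes a three-level chain and a 24-hour acknowledgment window. Both the role names and the window are hypothetical examples. An unacknowledged alert moves up one owner for each full window that passes, so an ignored alert always lands with someone accountable.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical escalation chain, from first owner to final owner.
ESCALATION_CHAIN = ["house_manager", "program_director", "compliance_officer"]


@dataclass
class Alert:
    description: str
    raised_at: datetime
    acknowledged: bool = False
    level: int = 0  # index into ESCALATION_CHAIN

    @property
    def owner(self) -> str:
        """The person currently accountable for responding to this alert."""
        return ESCALATION_CHAIN[self.level]


def escalate_if_overdue(alert: Alert, now: datetime,
                        window: timedelta = timedelta(hours=24)) -> Alert:
    """Move an unacknowledged alert up one owner for each full window that has passed."""
    if alert.acknowledged:
        return alert  # someone has taken ownership; no escalation needed
    full_windows = max(0, (now - alert.raised_at) // window)
    alert.level = min(full_windows, len(ESCALATION_CHAIN) - 1)
    return alert
```

The escalation level is recomputed from elapsed time rather than incremented, so a scheduled job can run the check repeatedly and get the same result for the same moment.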

Governance in IDD and Assisted Living Environments

In hospital systems, AI governance often focuses on clinical algorithms and diagnostic models. In IDD and Assisted Living environments, governance has a different emphasis.

It centers on documentation integrity, medication accuracy, incident transparency, service continuity, and audit preparedness. AI in these settings must strengthen operational reliability rather than introduce complexity.

When governed properly, AI can help:

- Surface compliance gaps before audits

- Identify documentation inconsistencies in real time

- Highlight emerging risk patterns

- Support proactive oversight instead of reactive correction

In service-based industries, governance is about stability and trust.

Building an AI Governance Framework

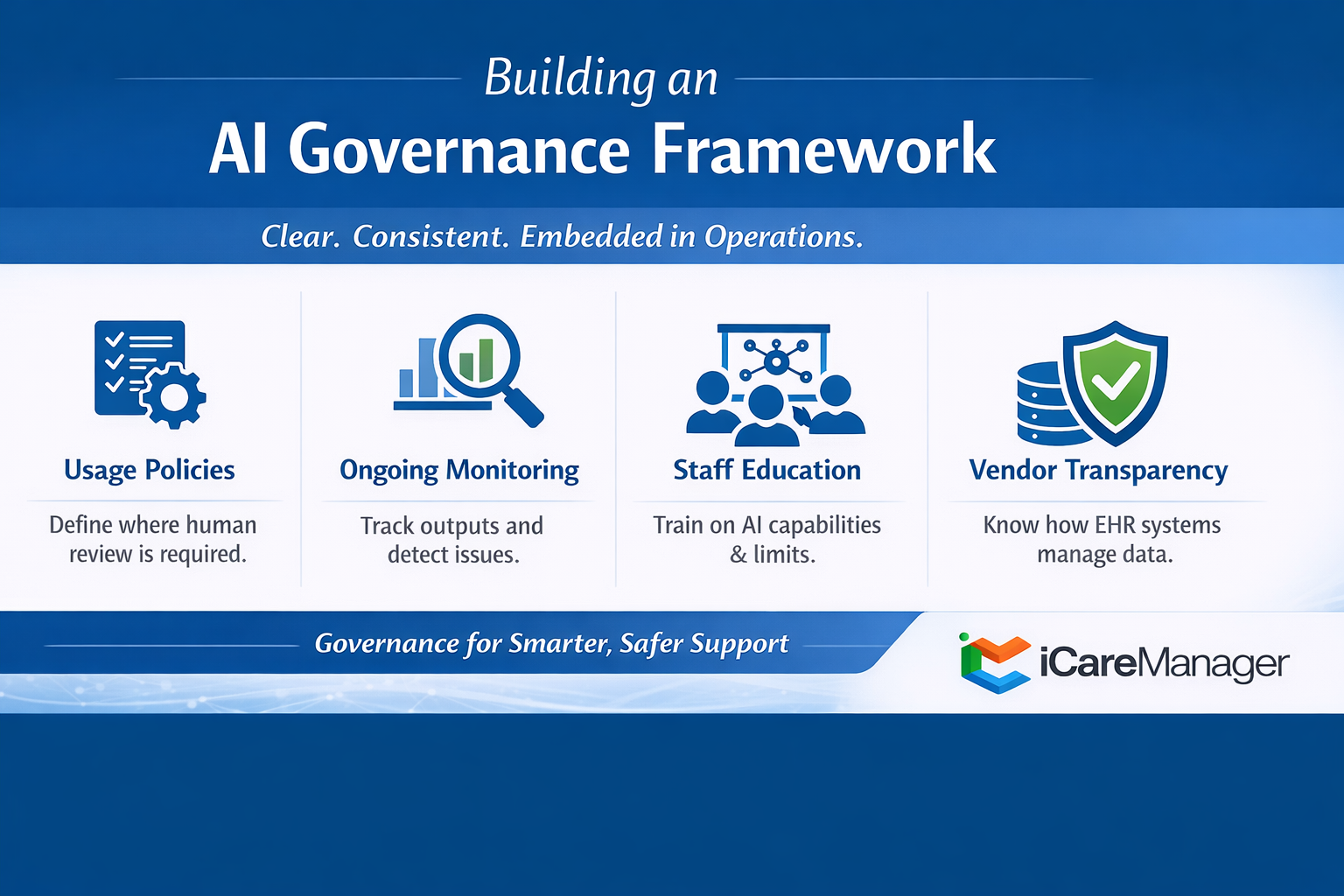

Governance does not need to be overly complex. It needs to be clear, consistent, and embedded into daily operations.

Strong AI governance includes defined usage policies that clarify when human review is required. It involves ongoing monitoring of outputs to detect inaccuracies or unintended trends. It requires staff education so teams understand both the capabilities and the limitations of AI-enabled systems.
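Ongoing monitoring needs at least one measurable signal. One simple option is the share of AI recommendations that reviewers override in each period, sketched below in Python under stated assumptions. A rising override rate is worth investigating because it can indicate that outputs are becoming less accurate, though it can also reflect changes in staffing or policy. The function names, the 0.05 tolerance, and the assumption that period labels such as "2026-01" sort chronologically are all illustrative.

```python
from collections import Counter


def override_rates(reviews):
    """reviews: iterable of (period, overridden) pairs, e.g. ("2026-01", True).

    Returns the share of reviewed AI recommendations that staff overrode,
    keyed by period in sorted order (assumes labels sort chronologically).
    """
    totals, overrides = Counter(), Counter()
    for period, overridden in reviews:
        totals[period] += 1
        overrides[period] += int(overridden)
    return {period: overrides[period] / totals[period] for period in sorted(totals)}


def override_rate_rising(rates, tolerance=0.05):
    """True when the latest period's rate exceeds the average of earlier periods by more than tolerance."""
    values = list(rates.values())
    if len(values) < 2:
        return False  # not enough history to compare against
    baseline = sum(values[:-1]) / len(values[:-1])
    return values[-1] - baseline > tolerance
```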

Vendor transparency also plays a critical role. Organizations should understand how their AI-enabled EHR platforms manage updates, data handling, and algorithm improvements. Governance improves when technology reinforces standards rather than bypassing them.

AI Governance Is Continuous

AI readiness is not static. Regulations evolve. Staffing models shift. Technology improves. Governance must adapt accordingly.

Organizations that treat governance as a continuous discipline regularly review workflows, reinforce documentation standards, and use data proactively. They encourage frontline feedback and align leadership expectations with system capabilities.

In complex service environments, resilience depends on oversight. Governance ensures that innovation strengthens operations rather than destabilizing them.

Final Thought

AI will continue to transform healthcare operations. But transformation without governance introduces risk.

For IDD providers and Assisted Living operators, AI governance protects the individuals receiving services, the staff delivering support, and the organization’s regulatory standing. It ensures that automation strengthens trust instead of undermining it.

Safety.

Bias awareness.

Privacy protection.

Clear accountability.

These are not technical add-ons. They are operational necessities.

In 2026 and beyond, the organizations that lead will not simply be those with the most advanced AI tools. They will be the ones with the strongest governance - because in modern healthcare, governance is compliance.